When AI Gets It Wrong, Your Customer Doesn’t Come Back

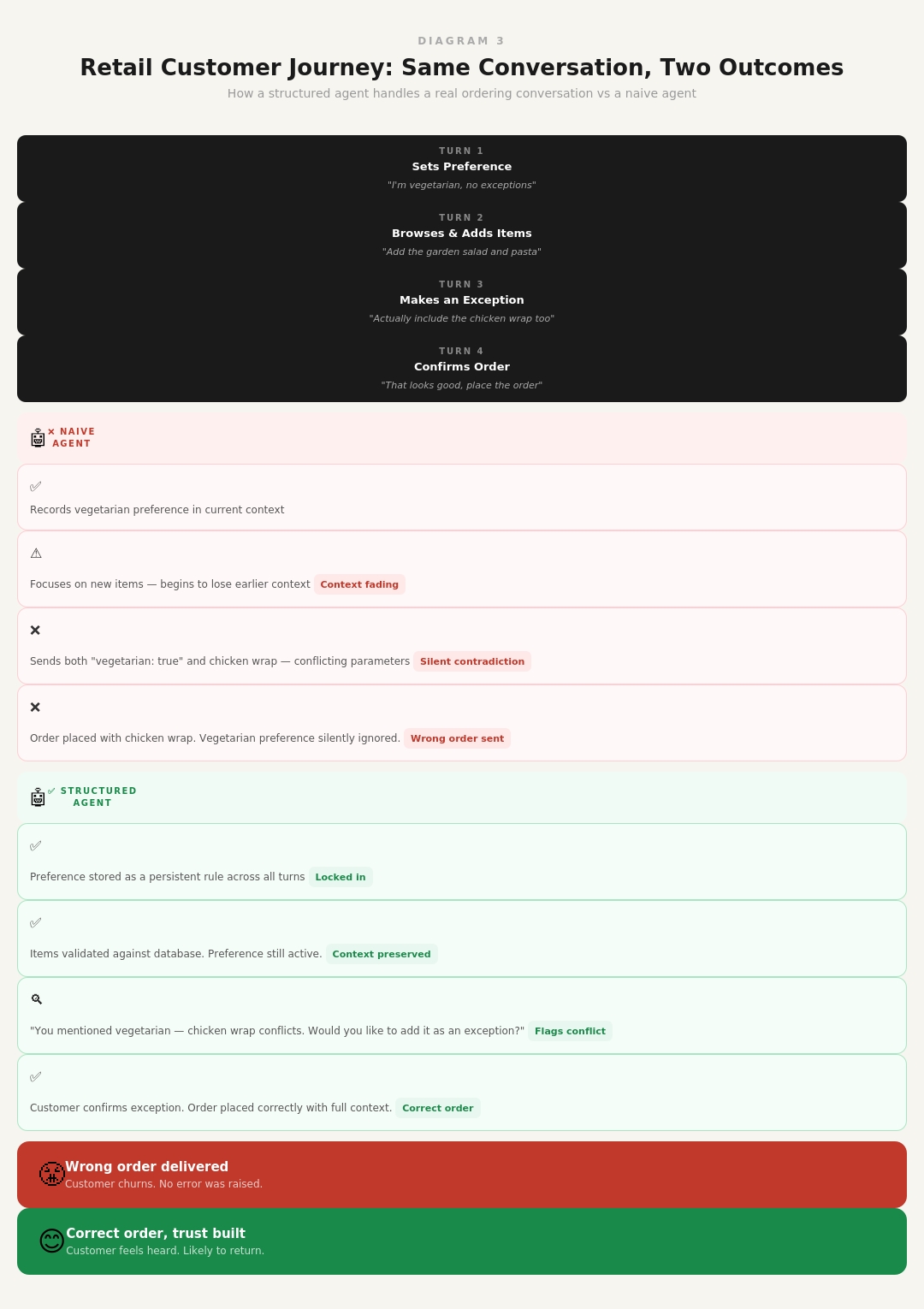

A customer opens your retail app and starts chatting with your AI ordering assistant. They say upfront: “I’m vegetarian, no exceptions.” A few messages later, they’re browsing, adding items, refining their order. The conversation flows naturally.

Then their order arrives. It contains meat.

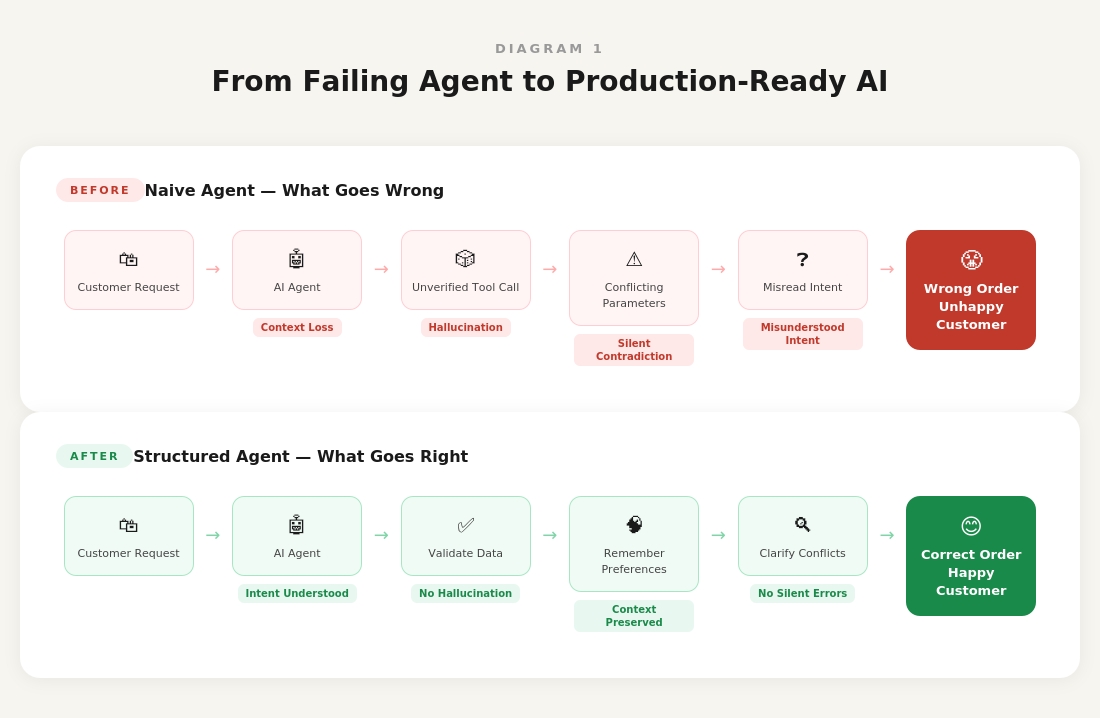

The AI remembered their last item selection. It forgot their dietary restriction. No error was raised. No warning was shown. The system worked exactly as designed — and still got it completely wrong.

That customer doesn’t call support. They don’t complain. They just don’t come back.

This is not a hypothetical. It is a pattern 1CloudHub observed repeatedly while building a production agentic AI system for a retail client. It is also one of the most dangerous failure modes in retail AI — not because it is dramatic, but because it is invisible. It does not show up in your error logs. It shows up in your retention numbers, three months later, when you cannot explain why repeat orders have declined.